I Built an AI That Hacks Laravel Apps in 50 Seconds. Here's What It Found That PHPStan and Psalm Missed.

I've been building Laravel applications for years. Like most developers, I relied on PHPStan and Psalm to catch bugs before they hit production. They're great tools — they find type errors, undefined methods, dead code. But here's what I discovered when I started thinking about security: they don't think like attackers.

So I built one that does.

Laravel Hack Auditor is an AI-powered security scanner for Laravel. You install it with Composer, run one command, and an AI model analyzes your code the way a penetration tester would — tracing user input through your application, checking if authorization is actually enforced, and finding the logic flaws that static analysis tools fundamentally cannot detect.

This is the story of how it works, what it catches, and why the results surprised me.

The Problem: SAST Tools Don't Think About Exploitability

PHPStan and Psalm are static analyzers: they parse your code into an AST, track types, and, in the case of Psalm's taint analysis, follow data from known sources to known sinks. Static Application Security Testing (SAST) tools work on the same principle, matching code structure against known vulnerability patterns. This approach is powerful for a specific class of bugs, but it has a fundamental blind spot.

They can't reason about application logic.

Consider this Laravel controller:

    public function show($id)
    {
        $order = Order::findOrFail($id);

        return view('orders.show', compact('order'));
    }

PHPStan sees a method that accepts a parameter, calls an Eloquent method, and returns a view. Everything type-checks. Psalm's taint analysis sees no user input flowing into a dangerous sink.

But a security researcher sees an Insecure Direct Object Reference (IDOR) — any authenticated user can view any order by changing the ID in the URL. There's no authorization check. No $this->authorize('view', $order). No policy. No gate.

This isn't a type error. It's not a taint flow. It's a missing security control, and it requires understanding what the code should do, not just what it does.

That's the gap I set out to close.
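For reference, the control missing from the controller above is small. The sketch below shows the kind of ownership check that $this->authorize('view', $order) would dispatch to. It uses plain PHP stand-ins instead of real Eloquent models so it runs on its own; the class names User, Order, and OrderPolicy and the user_id property are assumptions for illustration, not code from any real app.

```php
<?php

declare(strict_types=1);

// Plain-PHP stand-ins for Eloquent models (hypothetical shapes).
final class User
{
    public function __construct(public int $id) {}
}

final class Order
{
    public function __construct(public int $id, public int $user_id) {}
}

// Shaped like a Laravel policy: one method per ability, returning bool.
final class OrderPolicy
{
    public function view(User $user, Order $order): bool
    {
        // Only the owner may view the order. This is the check the
        // controller above never performs.
        return $user->id === $order->user_id;
    }
}
```

With a policy like this registered, calling $this->authorize('view', $order) (or Gate::authorize('view', $order)) right after findOrFail() makes Laravel throw an authorization exception, rendered as a 403 response, whenever the method returns false.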

What Laravel Hack Auditor Actually Does

At its core, the package sends your code to an AI model (Anthropic's Claude, OpenAI's GPT, or Google's Gemini) with a carefully engineered prompt that makes the AI think like a penetration tester.

But it's not just "send code to AI and hope for the best." The architecture has multiple layers designed to maximize accuracy and minimize false positives:

The Pipeline

    FileCollector → CodeExtractor → ContextCollector → PromptBuilder → AI → ResponseParser → Filters → Output

FileCollector gathers the PHP files to scan — controllers, models, routes, middleware, form requests. It supports full scans, path-specific scans, and git diff scans (only files changed since the last commit).

CodeExtractor reads each file and cleans it for analysis — removing comments that would waste tokens, detecting file types, and extracting the essential code structure.

ContextCollector is where things get interesting. Before the AI ever sees your code, the scanner collects application context — your route definitions, middleware assignments, model $fillable/$guarded arrays, FormRequest validation rules, and Gate/Policy registrations. This context gets injected alongside the code so the AI can reason about whether authorization is actually enforced, whether mass assignment is protected, and whether input is validated.
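As a rough illustration of what "injecting context" can mean, the sketch below renders collected routes and model settings into a text block that could be placed next to the code in the prompt. The function name buildContextBlock, the array shapes, and the output format are all assumptions; the package's real format is not shown in this article.

```php
<?php

declare(strict_types=1);

/**
 * Render collected application context as a plain-text block for the prompt.
 * Hypothetical helper; array shapes are assumed for this sketch.
 *
 * @param array<int, array{uri: string, action: string, middleware: string[]}> $routes
 * @param array<string, array{fillable: string[], guarded: string[]}> $models
 */
function buildContextBlock(array $routes, array $models): string
{
    $lines = ['## Routes'];
    foreach ($routes as $route) {
        // An empty middleware list is stated explicitly so the model
        // cannot assume protection that is not there.
        $middleware = $route['middleware'] === []
            ? '(none)'
            : implode(', ', $route['middleware']);
        $lines[] = sprintf(
            '%s -> %s [middleware: %s]',
            $route['uri'],
            $route['action'],
            $middleware
        );
    }

    $lines[] = '## Models';
    foreach ($models as $class => $config) {
        $lines[] = sprintf(
            '%s fillable=[%s] guarded=[%s]',
            $class,
            implode(', ', $config['fillable']),
            implode(', ', $config['guarded'])
        );
    }

    return implode("\n", $lines);
}
```

The point of a block like this is that a route with no middleware and a controller with no policy call can be seen side by side, which is what lets the model reason about a missing check rather than guess.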

PromptBuilder constructs a system prompt with over 40 framework-aware rules. It tells the AI what Laravel patterns are safe (e.g., Eloquent parameterized queries, $request->validated()), what patterns are dangerous (e.g., DB::raw() with user input, {!! !!} in Blade), and how to perform structured taint analysis.

ResponseParser takes the AI's JSON response and applies programmatic filters: self-contradiction detection (the AI flags something, then explains in the description why it's safe), broken taint trace filtering (the source is config(), not user input), and baseline comparison (skip findings already reported in the previous scan).
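Two of these filters are simple enough to sketch in a few lines. The version below is a deliberately naive illustration, not the package's implementation: the function name filterFindings, the finding array shape, the phrase list, and the fingerprint scheme are all assumptions.

```php
<?php

declare(strict_types=1);

/**
 * Drop findings whose description argues the code is safe, and findings
 * already present in the baseline from a previous scan.
 * Hypothetical helper; the finding shape is assumed for this sketch.
 *
 * @param array<int, array{file: string, type: string, description: string}> $findings
 * @param string[] $baseline fingerprints recorded by an earlier scan
 * @return array<int, array{file: string, type: string, description: string}>
 */
function filterFindings(array $findings, array $baseline): array
{
    // Phrases suggesting the model talked itself out of its own finding.
    $contradictions = ['is safe', 'not exploitable', 'properly escaped', 'no vulnerability'];

    $kept = [];
    foreach ($findings as $finding) {
        $description = strtolower($finding['description']);
        foreach ($contradictions as $phrase) {
            if (str_contains($description, $phrase)) {
                continue 2; // self-contradicting: skip this finding
            }
        }

        // Fingerprint on file and type so a re-scan recognises old findings.
        $fingerprint = sha1($finding['file'] . '|' . $finding['type']);
        if (in_array($fingerprint, $baseline, true)) {
            continue;
        }

        $kept[] = $finding;
    }

    return $kept;
}
```

Plain substring matching is crude, and a real filter would need to be more careful about wording, but it shows why these checks can run mechanically after the AI call instead of relying on the prompt alone.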

Taint Trace Protocol

Starting in v1.5, every injection-type finding (SQL injection, XSS, command injection, open redirect) must include a taint_trace field:

SOURCE: $request->input('q') → TRANSFORMS: trim() → SINK: DB::raw("...{$q}...")

The ResponseParser programmatically validates these traces. If the source is safe (like config() or Auth::user()->id), the finding is auto-dropped. If a chain-breaking transform exists (like (int) cast before a SQL sink, or htmlspecialchars() before HTML output), it's auto-dropped.

This forces the AI to show its reasoning, and lets the parser catch hallucinated vulnerabilities mechanically.
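A minimal sketch of that mechanical check, assuming the trace arrives as a single string in the format shown above. The function name isTraceExploitable, the regular expression, and the short lists of safe sources and chain-breaking transforms are assumptions for illustration; treating a malformed trace as droppable is also a choice made for this sketch.

```php
<?php

declare(strict_types=1);

/**
 * Decide whether a finding's taint trace describes a plausible flow.
 * Returns false when the finding should be dropped.
 * Hypothetical helper, not the package's parser.
 */
function isTraceExploitable(string $trace): bool
{
    // Expected shape: "SOURCE: ... → TRANSFORMS: ... → SINK: ..."
    $pattern = '/^SOURCE:\s*(.+?)\s*→\s*TRANSFORMS:\s*(.*?)\s*→\s*SINK:\s*(.+)$/u';
    if (preg_match($pattern, $trace, $m) !== 1) {
        return false; // malformed trace: nothing to verify
    }
    [, $source, $transforms, $sink] = $m;

    // Sources that are not attacker-controlled.
    foreach (['config(', 'Auth::user()->id'] as $safeSource) {
        if (str_starts_with($source, $safeSource)) {
            return false;
        }
    }

    // Transforms that break the chain for a given kind of sink.
    $isSqlSink = str_contains($sink, 'DB::');
    if ($isSqlSink && str_contains($transforms, '(int)')) {
        return false;
    }
    if (!$isSqlSink && str_contains($transforms, 'htmlspecialchars(')) {
        return false;
    }

    return true;
}
```

The example trace above passes this check, because trim() does nothing to neutralise SQL metacharacters, while the same flow with an (int) cast in the transforms would be dropped.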

Framework-Aware Rules

The prompt includes explicit "never flag" rules for common Laravel patterns that less sophisticated scanners trip on:

- $request->validated() or $request->safe() → mass assignment is safe

- $this->authorize() or Gate::allows() in the controller → authorization exists

- Eloquent query builder methods → parameterized by default

- {{ $variable }} in Blade → auto-escaped

- Hash::make() / Hash::check() → proper password handling

- Route model binding with policies → implicit authorization

These rules eliminate the most common false positives that make developers distrust security scanners.

The Proof: Testing Against a Known-Vulnerable App

To validate the scanner, I built laravel-vuln-lab — an intentionally vulnerable Laravel 13 application with 8 known security flaws:

- SQL Injection: raw DB::select() with user input

- Stored XSS: {!! $comment !!} rendering unescaped input

- Broken Authentication: hardcoded admin bypass

- IDOR: /profile/{id} with no authorization

- Command Injection: shell_exec("ping " . $input)

- Mass Assignment: $request->all() with no $fillable

- Sensitive Data Exposure: public /debug route dumping config

- Broken Access Control: admin route with commented-out middleware

Then I ran every major PHP security tool against it.

The Results

Psalm's taint analysis — the tool specifically designed to find injection vulnerabilities — returned "No errors found!" on an application with SQL injection, XSS, and command injection sitting in plain sight.

PHPStan found 14 errors, but all of them were type mismatches (View vs Contracts\View\View) and undefined static methods (Eloquent magic). Zero security findings.

The PHP Security Checker found nothing because the vulnerabilities aren't in the dependencies; they're in the application code.

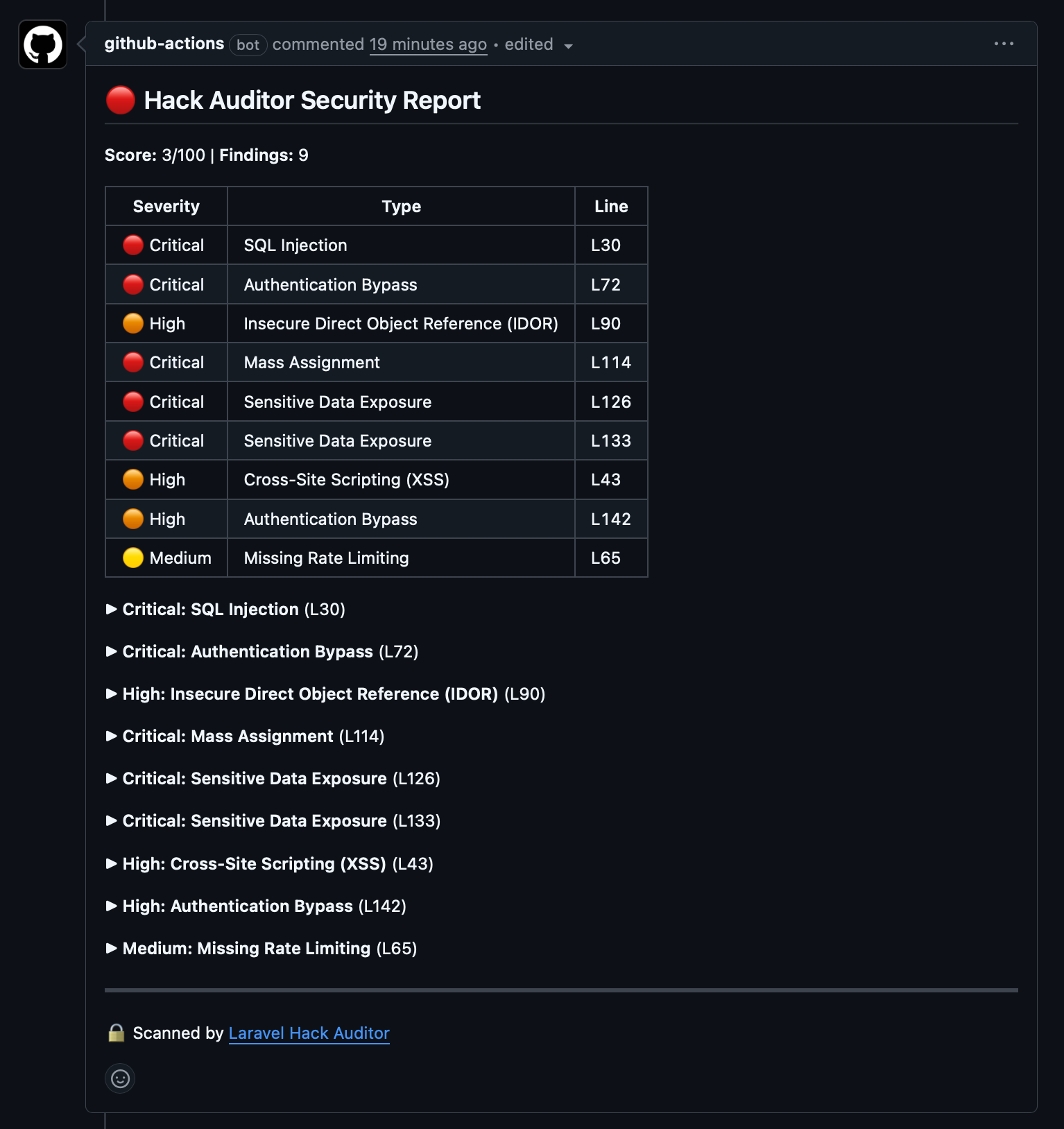

Hack Auditor found 9 issues in 31 seconds, at a cost of $0.07 in API tokens. Every finding included the OWASP category, a description of why it's exploitable, the evidence (exact code), and a copy-paste fix.

Why Traditional SAST Fails Here

The answer is fundamental, not incidental. Static analysis tools operate on code structure — they parse ASTs, track types through call graphs, and match patterns. They're excellent at finding:

- Type mismatches

- Null pointer dereferences

- Simple taint flows (user input → dangerous function with no sanitization in between)

But they cannot find:

- Missing controls: code that should exist but doesn't (like a missing authorize() call)

- Logic flaws: code that executes correctly but implements the wrong security policy

- Context-dependent vulnerabilities: code that's safe in one context but dangerous in another

An AI model, given the right context and prompt engineering, can reason about these. It understands that a controller method accessing another user's data without a policy check is a vulnerability — not because of a code pattern, but because of a missing code pattern.

How to Use It

Installation

    composer require mahdisphp/laravel-hack-auditor --dev
    php artisan install:ai

Configure your AI provider in .env:

    HACK_AUDITOR_AI_PROVIDER=anthropic
    HACK_AUDITOR_AI_MODEL=claude-sonnet-4-6
    ANTHROPIC_API_KEY=your-key

Quick Demo

php artisan hack:demo

This scans your app with a single AI request and shows findings in a formatted table — complete with severity badges, OWASP categories, and suggested fixes. It's the fastest way to see what the scanner finds.

Full Scan

php artisan hack:scan

This runs a comprehensive scan across all configured paths (controllers, models, routes, middleware, form requests). Findings are displayed in a detailed report with a security score from 0–100.

Output Formats

    # JSON for CI/CD integration
    php artisan hack:scan --json

    # HTML report
    php artisan hack:scan --format=html --output=security-report.html

    # Only scan files changed since last commit
    php artisan hack:scan --diff

    # Specific file or directory
    php artisan hack:scan --path=app/Http/Controllers/PaymentController.php

CI/CD with GitHub Actions

There's a dedicated GitHub Action that runs the scan on every pull request and posts findings as inline comments:

    - uses: mahdi-salmanzade/laravel-hack-auditor-action@v1
      with:
        api-key: ${{ secrets.ANTHROPIC_API_KEY }}

It scans only the files changed in the PR, posts a summary comment with a severity table, and adds inline annotations on the exact vulnerable lines in the "Files changed" tab. You can set a fail-on threshold to block PRs with critical or high findings.

Architecture Decisions

Why AI and Not Rules?

Rule-based scanners (like Semgrep or custom PHPStan rules) work well for known patterns. If you know the exact code pattern that constitutes a vulnerability, you can write a regex or AST matcher for it.

The problem is that security vulnerabilities in web applications are contextual. DB::raw() isn't always dangerous — it depends on what's inside it. A missing authorize() call isn't always a vulnerability — it depends on whether the route is public or protected by middleware.

AI models can reason about this context in ways that pattern matchers cannot. The tradeoff is cost (API tokens) and non-determinism (the AI might miss things or hallucinate). The taint trace protocol and programmatic filters exist to manage the non-determinism.

Why Three Providers?

The scanner supports Anthropic (Claude), OpenAI (GPT), and Google (Gemini) through Laravel's AI driver. Different teams have different provider preferences and budget constraints. In my experience so far, Claude tends to produce the most detailed findings with the fewest false positives, GPT-4o is faster and cheaper, and Gemini is the budget option.

Why Not a Standalone Tool?

Hack Auditor is a Laravel package, not a standalone binary. This is intentional — it uses Laravel's service container, config system, and Artisan console to integrate deeply with the application it's scanning. It reads your actual route definitions, middleware stack, and model configurations at runtime. A standalone tool would have to parse these from source code, which is significantly harder and less accurate.

What's Next

The scanner is at v1.5 with 319 passing tests, taint trace analysis, and framework-aware rules. The next major feature is a verification pass: after the initial scan finds candidate vulnerabilities, a second AI call reviews each finding rated HIGH or above with the full application context and asks: "Can you construct a concrete exploit for this? If not, dismiss it."

This should significantly reduce false positives for the remaining edge cases where the first pass flags something that looks dangerous but isn't exploitable given the full context.
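Since this pass is not built yet, the following is only a sketch of the control flow it implies. The function name verifyFindings, the severity labels, and the finding shape are assumptions, and the $canExploit callable stands in for the second AI call.

```php
<?php

declare(strict_types=1);

/**
 * Second-pass filter: keep lower-severity findings as they are, and keep
 * HIGH or CRITICAL findings only when the verifier confirms an exploit.
 * Hypothetical helper; the finding shape is assumed for this sketch.
 *
 * @param array<int, array{severity: string, title: string}> $findings
 * @param callable(array): bool $canExploit stand-in for the second AI call
 * @return array<int, array{severity: string, title: string}>
 */
function verifyFindings(array $findings, callable $canExploit): array
{
    $needsVerification = ['HIGH', 'CRITICAL'];

    return array_values(array_filter(
        $findings,
        static function (array $finding) use ($needsVerification, $canExploit): bool {
            if (!in_array($finding['severity'], $needsVerification, true)) {
                return true; // below the threshold: passed through unverified
            }

            return $canExploit($finding);
        }
    ));
}
```

Keeping the verifier behind a callable means the filtering logic can be tested with a stub, without spending API tokens.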

The package is open source and MIT licensed. If you're building Laravel applications and care about security, try it:

    composer require mahdisphp/laravel-hack-auditor --dev
    php artisan hack:demo

It takes 15 seconds. You might be surprised by what it finds.

Links:

- Laravel Hack Auditor — the package

- GitHub Action — CI/CD integration

- laravel-vuln-lab — the test repo with comparison data